1. Upload documents

Upload PDFs, schematics, specs, or any reference files that describe your system to the Atlas data catalog. Each upload returns a dataset_name; capture it, because you’ll pass it to the metagraph update job in the next step.

Capture the dataset_name for each uploaded file.

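You can upload several files in one request by repeating the form field, as in curl's -F "files=@a.pdf" -F "files=@b.pdf", or send them in separate requests. Either way, each file comes back with its own dataset_name. The endpoint is synchronous: when it returns, the bytes are in the catalog and ready to use in step 2.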
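As a rough sketch of the multi-file case, the helper below builds one multipart part per file, all under the repeated field name files, mirroring the -F "files=@a.pdf" -F "files=@b.pdf" form. The name build_upload_parts is hypothetical. The returned list has the shape accepted by the files= argument of the third-party requests library; the upload URL and authentication are left out because they are not shown on this page.

```python
from pathlib import Path


def build_upload_parts(paths):
    """Return one ("files", (filename, content)) part per path.

    Hypothetical helper: only the repeated "files" field name comes from
    the text above; the rest is illustrative.
    """
    parts = []
    for path in map(Path, paths):
        # Same field name for every file, so one request carries them all.
        parts.append(("files", (path.name, path.read_bytes())))
    return parts
```

Reading each file fully into memory keeps the sketch short; for large files you would likely pass open file handles instead.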

2. Create a metagraph update job

The job reads only the documents you pass to it via context_files; it does not scan a system’s prior uploads. Pass the dataset_names from step 1.

Capture the job’s uuid from the response.

context_files is the list of dataset_names captured in step 1. If you omit it (or pass an empty list) and don’t supply additional_context, the job completes with no metagraph changes. additional_context is optional — use it to scope or guide the analysis when you have a specific part of the system you want Atlas to model.
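Because a request with neither context_files nor additional_context completes without changing the metagraph, it can help to catch that case before sending anything. The sketch below assembles a request body and refuses the empty combination. The function name build_job_payload and the flat JSON shape are assumptions; only the field names system_id_or_name, context_files, and additional_context come from this page.

```python
def build_job_payload(system_id_or_name, context_files=None, additional_context=None):
    """Assemble a body for the metagraph update job (assumed flat JSON shape)."""
    context_files = list(context_files or [])
    if not context_files and not additional_context:
        # The API would accept this, but the job would make no changes.
        raise ValueError("pass context_files, additional_context, or both")
    payload = {
        "system_id_or_name": system_id_or_name,
        "context_files": context_files,
    }
    if additional_context:
        # Optional: scopes or guides the analysis.
        payload["additional_context"] = additional_context
    return payload
```

Raising on the client side is a design choice for this sketch, not documented API behavior.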

3. Start the job run

Capture the job run’s uuid from the response.

4. Poll for completion

The status field transitions through pending → running → completed (or failed). Poll until you see a terminal state.

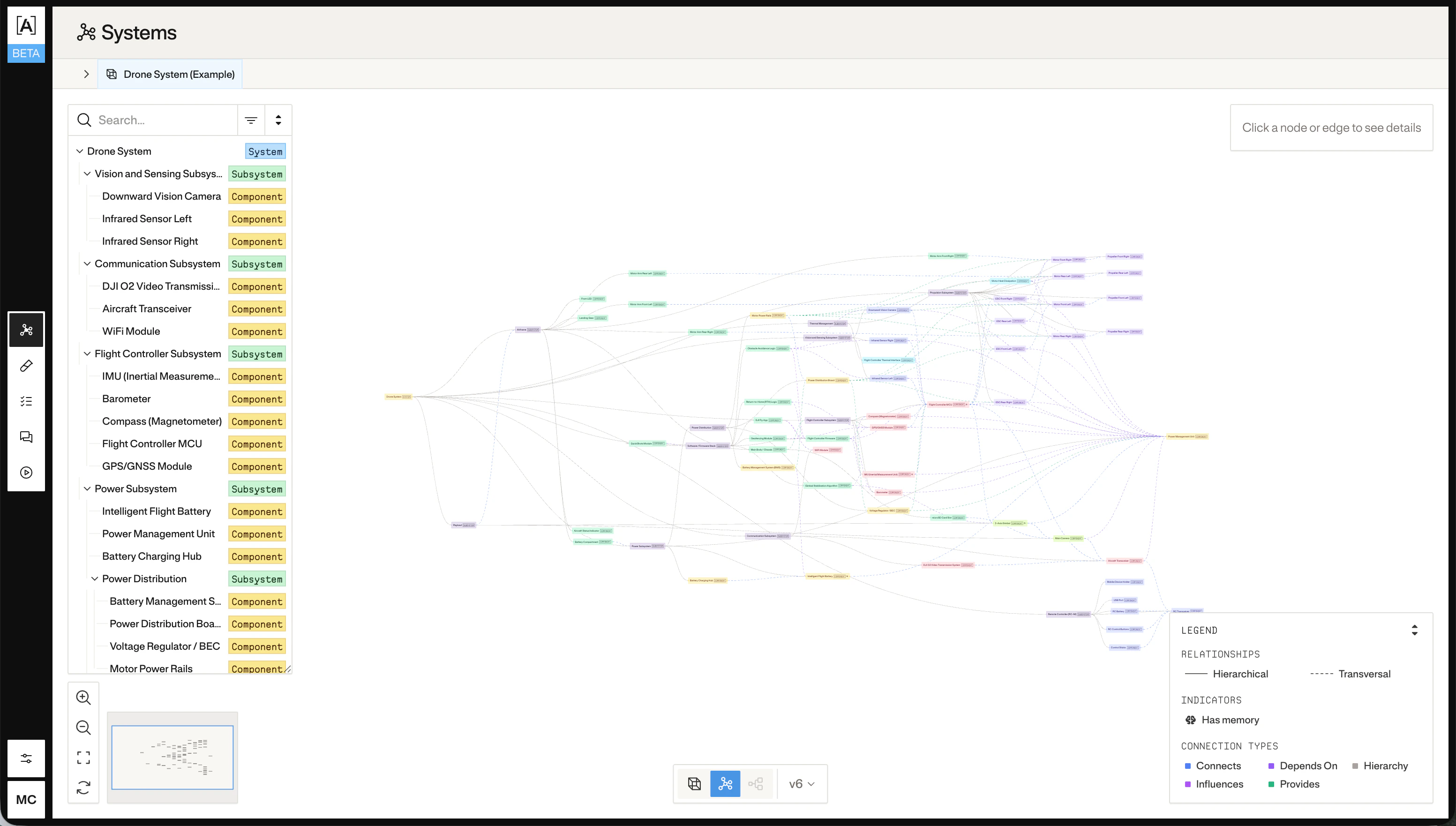
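The polling step can be sketched as a small loop that stops on either terminal state. Here fetch_status is a hypothetical callable that you supply; it should perform the status request and return the status string. The interval and timeout defaults are arbitrary choices, not documented values.

```python
import time

# Terminal states named in this step.
TERMINAL_STATES = {"completed", "failed"}


def poll_until_terminal(fetch_status, interval_s=5.0, timeout_s=600.0, sleep=time.sleep):
    """Call fetch_status() until it returns a terminal state or time runs out."""
    deadline = time.monotonic() + timeout_s
    while True:
        status = fetch_status()
        if status in TERMINAL_STATES:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job run still {status!r} after {timeout_s}s")
        sleep(interval_s)
```

The caller still has to check whether the returned state is completed or failed before moving on to step 5.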

5. Inspect the metagraph

Once the job completes, fetch the result. If you set system_id_or_name to an existing system in step 2, $SYSTEM_ID is that same value. If you passed a new name to create a system from scratch, read the resulting UUID from the job-run’s preview.metagraph_system_id field.
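The choice of system ID can be sketched as below. The function name resolve_system_id and the created_new_system flag are hypothetical, and the sketch assumes the job-run response has been parsed from JSON into a dict with a nested preview object; only the preview.metagraph_system_id path comes from this page.

```python
def resolve_system_id(system_id_or_name, created_new_system, job_run):
    """Pick the value to use as $SYSTEM_ID when fetching the metagraph."""
    if not created_new_system:
        # Existing system: reuse the value passed in step 2.
        return system_id_or_name
    # New system: the UUID is reported on the job run's preview.
    system_id = (job_run.get("preview") or {}).get("metagraph_system_id")
    if not system_id:
        raise KeyError("preview.metagraph_system_id missing from job run")
    return system_id
```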

Add the ?version=<n> query parameter to fetch the metagraph as it existed at a specific applied version.
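A minimal sketch of adding the version parameter, using only the standard library. The function name metagraph_url is hypothetical, and base_url stands in for the metagraph endpoint, which is not shown on this page; the sketch also assumes base_url carries no query string of its own.

```python
from urllib.parse import urlencode


def metagraph_url(base_url, version=None):
    """Append ?version=<n> when a specific applied version is requested."""
    if version is None:
        # No version given: leave the URL untouched.
        return base_url
    return f"{base_url}?{urlencode({'version': version})}"
```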